Midterm - Digital ocean

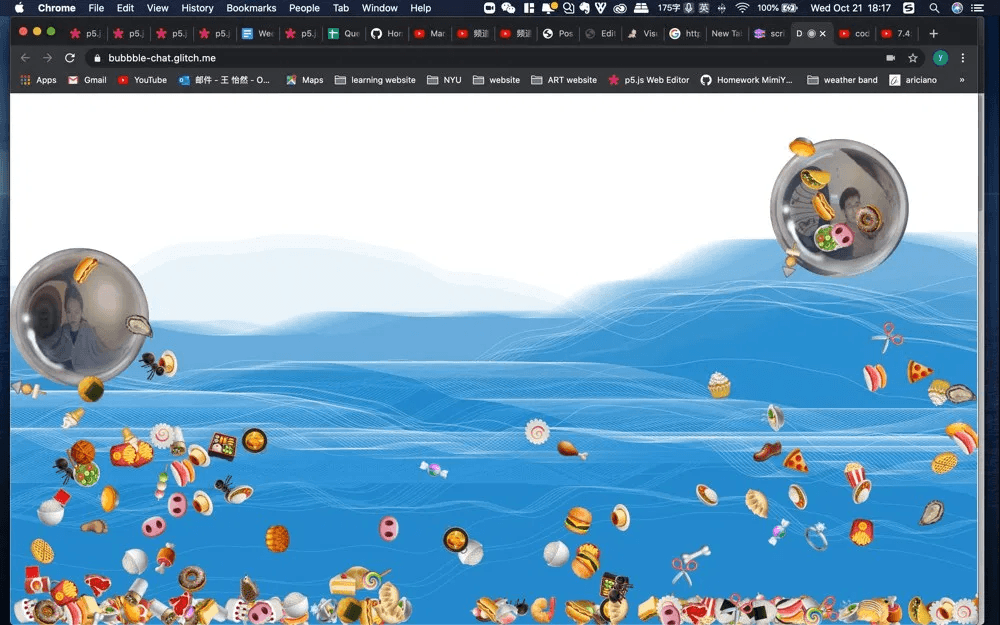

A collaboration with Marcel for the midterm project. We made a bubble video chat world with several interesting features.

- An animated background that follows your mouse.

- Move your head to move your bubble. The algorithm tracks your nose to calculate the moving direction and speed.

- Open your mouth to start rotating and vomit emojis.

- Overlap with other people’s bubbles to create funny distortion effects.

- Try it on Glitch.me

- Source Code on GitHub

Background

Thanks to Lea for sharing her p5 code. This part runs in p5 instance mode, so it doesn’t touch global variables.
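As a rough illustration of what instance mode looks like, here is a minimal sketch. It is not Lea's actual background code: the `backgroundSketch` name, the `background-holder` container id, and the circle that follows the mouse are placeholders made up for this example. The point is only that every p5 function is reached through the instance `p` instead of the global scope.

```javascript
// Hypothetical stand-in for the background sketch. In instance mode all p5
// functions and variables live on `p`, so nothing leaks onto `window`.
const backgroundSketch = (p) => {
  p.setup = () => {
    p.createCanvas(p.windowWidth, p.windowHeight);
    p.noStroke();
  };

  p.draw = () => {
    p.background(10, 30, 60);
    // Placeholder animation: a translucent circle at the mouse position.
    p.fill(120, 200, 255, 150);
    p.ellipse(p.mouseX, p.mouseY, 80, 80);
  };
};

// Start the sketch only when p5 is actually loaded on the page.
if (typeof p5 !== 'undefined') {
  // 'background-holder' is an assumed id of the container element.
  new p5(backgroundSketch, 'background-holder');
}
```

Because the sketch is just a function that receives `p`, other scripts on the page can use names like `draw` or `mouseX` without clashing with it.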

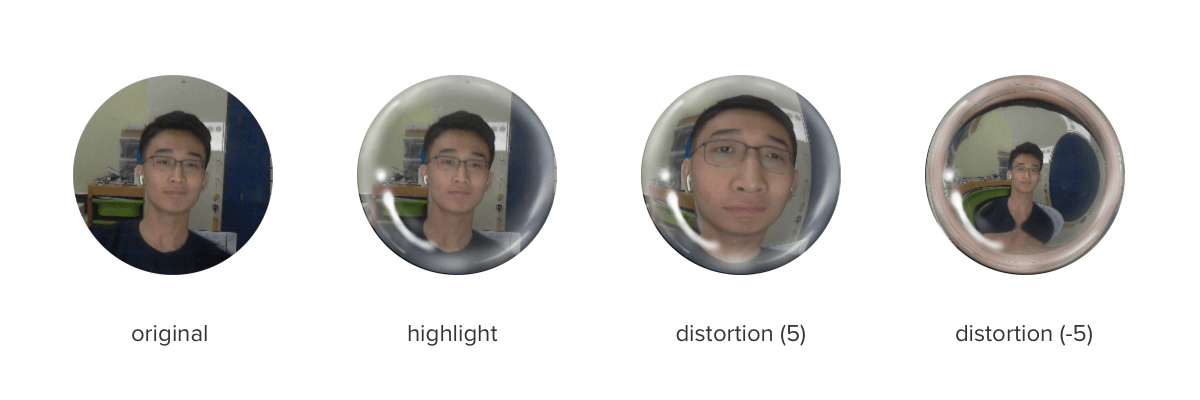

Fisheye effect

To make the bubble look like a real one, we did two things: added a highlight layer and applied a fisheye distortion. We used Fisheye.js to create the effect. It draws a video or image onto a canvas with distortion using WebGL.
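Fisheye.js does the distortion on the GPU, but the idea behind a fisheye bulge can be sketched on the CPU. The function below is a generic radial mapping chosen for illustration, not the library's shader or API. `fisheyeSample` and `strength` are made-up names, and the coordinates are assumed to be normalized so that the bubble is the unit circle around (0, 0).

```javascript
// For a destination point (u, v) inside the unit circle, return the source
// point to sample. Pulling samples toward the centre magnifies the middle of
// the image, which gives the bulging look. This is an assumed model.
function fisheyeSample(u, v, strength = 0.5) {
  const r = Math.hypot(u, v);
  // The centre and everything on or outside the rim stay where they are.
  if (r === 0 || r >= 1) return { x: u, y: v };
  // r^strength < 1 inside the circle, so the sample moves toward the centre.
  const scale = Math.pow(r, strength);
  return { x: u * scale, y: v * scale };
}
```

With `strength = 0` the mapping is the identity, and larger values exaggerate the bulge while keeping the rim of the bubble fixed.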

Face landmark detection

Landmark detection is done with face-api.js. There is a very simple and useful YouTube tutorial.

The algorithm tracks three points: the nose and the centers of the upper and lower lips. The position of the nose determines the speed and direction in which the bubble moves. An open mouth is detected by computing the ratio of the distance between the upper and lower lip to the distance between the nose and the lower lip.
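The post does not show how the nose position becomes movement, so here is one way it could work, under stated assumptions: the nose offset from the center of the video frame gives the direction, the size of the offset gives the speed, and a small dead zone keeps the bubble still when the head is roughly centered. `noseToVelocity`, `maxSpeed`, and `deadZone` are hypothetical names and values, not taken from the project.

```javascript
// Assumed mapping from nose position to bubble velocity (pixels per frame).
// `nose` is a landmark point with x/y in video-frame pixels.
function noseToVelocity(nose, frameWidth, frameHeight, maxSpeed = 5, deadZone = 0.1) {
  // Offset from the frame centre, normalized to roughly [-1, 1] per axis.
  const dx = (nose.x - frameWidth / 2) / (frameWidth / 2);
  const dy = (nose.y - frameHeight / 2) / (frameHeight / 2);
  const magnitude = Math.hypot(dx, dy);
  // Ignore tiny offsets so the bubble does not drift when the head is centred.
  if (magnitude < deadZone) return { vx: 0, vy: 0 };
  // Cap the offset length at 1 so the speed never exceeds maxSpeed.
  const scale = Math.min(magnitude, 1) / magnitude;
  return { vx: dx * scale * maxSpeed, vy: dy * scale * maxSpeed };
}
```

For a 640x480 frame, a nose at (480, 240) is halfway to the right edge and would move the bubble right at half of `maxSpeed`. Depending on whether the video is mirrored, the sign of `vx` may need to be flipped.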

    // got face landmarks
    const marks = detections[0].landmarks.positions;
    const nose = marks[30];
    const upperLip = marks[62];
    const lowerLip = marks[66];
    const ulDistance = Math.sqrt(
      Math.pow(upperLip.x - lowerLip.x, 2) +
        Math.pow(upperLip.y - lowerLip.y, 2)
    );
    const nlDistance = Math.sqrt(
      Math.pow(nose.x - lowerLip.x, 2) + Math.pow(nose.y - lowerLip.y, 2)
    );
    // mouth open
    if (ulDistance / nlDistance > 0.3) {
      // mouth is open: start rotating and emitting emojis
    }

Peer2peer

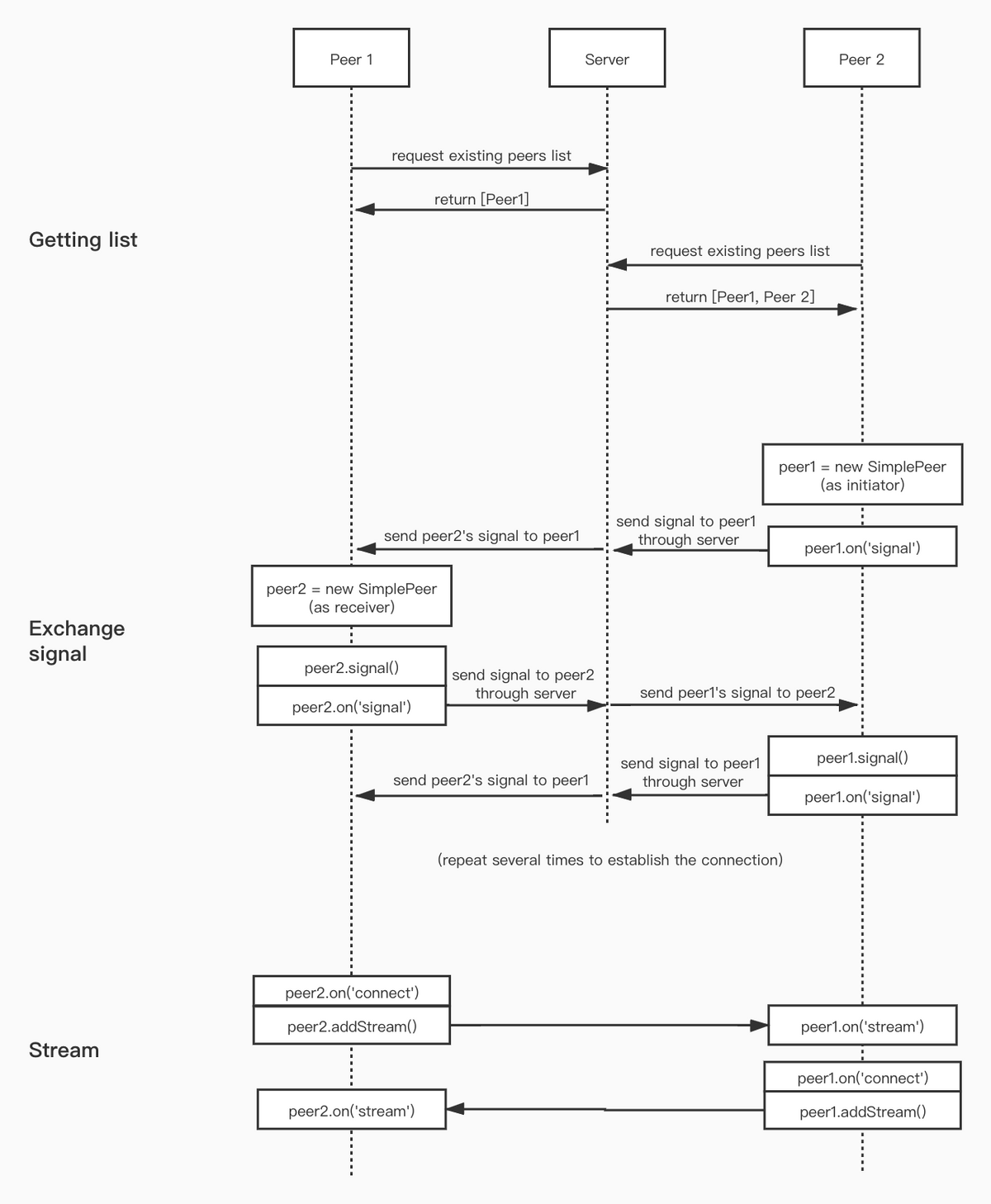

For better performance, we use SimplePeer to stream video over a peer-to-peer network. It’s kinda tricky to use since it’s completely different from the client-server model. Sometimes it just returns ‘connection failed’ with no context, which makes it really hard to debug. I have no clue whether the problem was with the ISP, router, proxy settings, or browser.

We also found that the order in which we connected to the server can affect the result. It was much more likely to work if I connected later rather than first. Here is a graph of how SimplePeer works, as far as I know. I divide the process into three stages: getting the list, exchanging signals, and streaming.
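The three stages can be sketched in code roughly like this. It is a simplified outline and not the project's actual implementation. The `joinRoom` helper, the `peer-list` and `signal` event names, and the `{ to, from, data }` message shape are all assumptions, as is the choice that the newcomer initiates every connection. `socket` stands for any signaling channel with `on`/`emit` (for example socket.io), and `PeerClass` is passed in so that `SimplePeer` can be supplied in the browser. The `signal`, `stream`, and `error` events and the `peer.signal()` method are SimplePeer's own.

```javascript
// Wire a signaling channel to SimplePeer-style peers.
// Event names and message shapes here are hypothetical.
function joinRoom({ PeerClass, socket, localStream, onRemoteStream }) {
  const peers = new Map();

  const createPeer = (remoteId, initiator) => {
    const peer = new PeerClass({ initiator, stream: localStream });
    // Stage 2 (sending): relay our offer/answer/ICE data through the server.
    peer.on('signal', (data) => socket.emit('signal', { to: remoteId, data }));
    // Stage 3: the remote video arrives directly from the other browser.
    peer.on('stream', (stream) => onRemoteStream(remoteId, stream));
    peer.on('error', (err) => console.error(`peer ${remoteId} failed:`, err));
    peers.set(remoteId, peer);
    return peer;
  };

  // Stage 1: the server sends the ids of everyone already connected, and the
  // newcomer starts a connection to each of them (assumed convention).
  socket.on('peer-list', (ids) => ids.forEach((id) => createPeer(id, true)));

  // Stage 2 (receiving): hand incoming signaling data to the matching peer,
  // creating a non-initiating peer the first time a newcomer contacts us.
  socket.on('signal', ({ from, data }) => {
    const peer = peers.get(from) || createPeer(from, false);
    peer.signal(data);
  });

  return peers;
}
```

In the browser this would be called as something like `joinRoom({ PeerClass: SimplePeer, socket, localStream, onRemoteStream })`, with the server expected to forward each `signal` message to the `to` peer and tag it with `from`. Logging the `error` event per peer at least shows which connection failed, even when the message itself carries little context.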